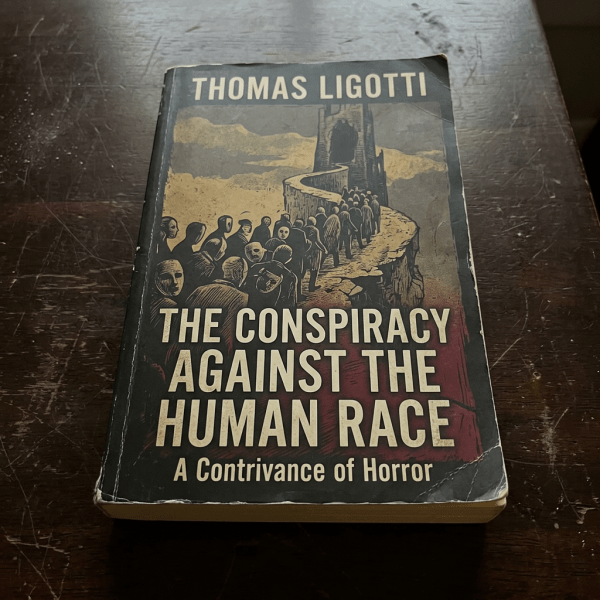

I've been reading Thomas Ligotti quite a bit. Didn't really get his fiction works, though some were quite... atmospheric (which I loved). They tended to be really difficult reading though (the words he uses tend to lie outside my standard vocabulary). More recently I picked up his non-fiction work, The Conspiracy Against the Human Race,... Continue Reading →

Shoot the damn dog

I'm currently reading the book Shoot the Damn Dog: A Memoir of Depression and it's... depressing. I'd thought of reading the book because I wanted to understand depression a little more, as I felt I was a little more susceptible to its callings (for reasons I won't go into here); and with greater understanding, the... Continue Reading →

Above Average

I'm currently reading this book called Say Thank You for Everything. One of the tips that the author gives is: focus on being just slightly better. No need for superstardom. It appealed to me because that's pretty much how I've lived my life: not focusing on being the best, necessarily; just good enough, and where... Continue Reading →

Balance

Reminder to self: leaning in isn't the answer; but nor is quiet quitting. It's finding that balance between the two.

Long Distance Running

I've recently gone back to regular long distance running, having averaged more than 20km per week in the last month, and even had a couple of 90 minute runs. I haven't done this since before the pandemic, so it's a pretty big deal for me. Before the pandemic these would be "medium" runs, and most... Continue Reading →

Proxies

As you may know, I love to type. I believe I did my first online typing test when I was about 15, and have been hooked ever since. In the 20-odd years since, I've been doing typing tests every once in a while, sometimes much more than once in a while, not necessarily to improve... Continue Reading →

Tackling the Missing Middle of Adoption

As he watched the presentation we were giving him on the machine learning project we were working on, I couldn't help but notice his furrowed brows. I knew him to be a natural sceptic, one who loved asking tough questions that dug deep into the heart of the matter. Though these questions occasionally bordered on... Continue Reading →

When things look easy

I'll start with a quote I read today from the book Getting Ahead (Garfinkle, 2011) about a problem faced by people good at their craft. It made me smile because this was the first time I'd seen it brought up anywhere, and it was one of those things I thought you just... Continue Reading →

On antifragility and new stuff

Taleb once again scores with me with his book on “antifragility”. Like his book on randomness and black swans, this book has opened my mind to a concept that I’ve intuitively felt but never been able to put down in words. I wrote once about “destroying things” to love them more – making new things... Continue Reading →

The Pursuit of Happiness

Erich Fromm, in To Have or To Be: We are a society of notoriously unhappy people: lonely, anxious, depressed, destructive, dependent -- people who are glad when we have killed the time we are trying so hard to save.