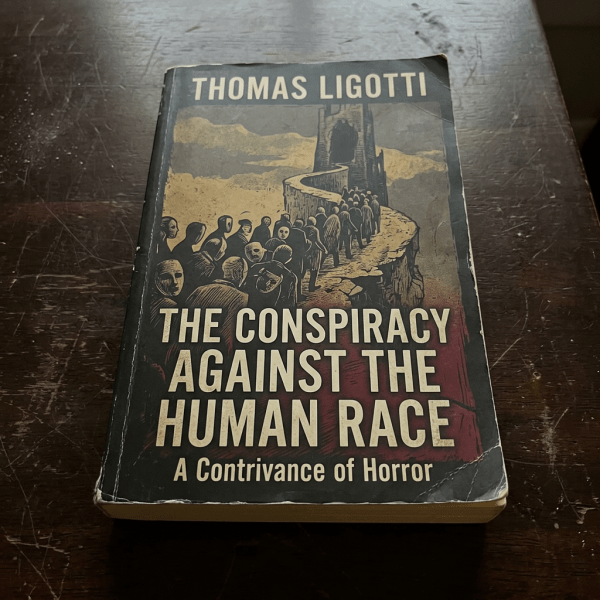

I've been reading Thomas Ligotti quite a bit. Didn't really get his fiction works, though some were quite... atmospheric (which I loved). They tended to be really difficult reading though (the words he uses tend to lie outside my standard vocabulary). More recently I picked up his non-fiction work, The Conspiracy Against the Human Race,... Continue Reading →

The hobbyist internet

I miss the hobbyist internet. The internet before everything became a means to an end. Before everyone was trying to be an expert; to sound smart; to "monetise". Back then we were doing silly things like creating websites to celebrate our favourite things; for me it was FIFA 1996; pictures of lightning (and tornadoes!); and... Continue Reading →

Happy new year

In another life it'd be "happy new year!" but doesn't really feel like it this year. There's something about the whole political and economic climate that feels off. Maybe I'm getting old, no longer as enthusiastic for life, or something. I don't know. But the previous years' feelings of optimism and excitement, feelings of the... Continue Reading →

The Future of Education

As I encouraged/scolded/nagged my kid for the umpteenth time to study for his "听写" (the Chinese equivalent of an English spelling test) I couldn't help but think to myself what a waste of time it was. Don't let the pointlessness of what I thought this exercise really was show, I thought to myself. With my... Continue Reading →

Remember the people

For the past few months I've gotten quite into the groove of using AI for work and life. The more I use it, the more I start seeing the rough edges that, if one isn't careful, can cut unexpectedly into real life. One of those edges I've found is its "lack of humanity". I know,... Continue Reading →

Hello, ether.

It's been such a long time since I last wrote. I suppose I could blame my change of jobs, which has taken up a lot of mental bandwidth. I suppose I could also blame how my writing catharsis leans heavily towards AI/LLMs now (e.g. ChatGPT). Writing tends to be quite fungible for me, scratching the... Continue Reading →

Ritual

For over 20 years I'd used the run/walk running method I learned from a book by Jeff Galloway. It'd kept me injury-free (the one time I got a bad case of plantar fasciitis was when I stopped run/walking), and had been something I'd preached to anyone and everyone who would listen. Then six months ago... Continue Reading →

My Foray into AI

This past month or so has been really exciting for me. Among other things, one of the most exciting has definitely been my dabbling with (and then diving head first into) Generative AI (GenAI), or more specifically Large Language Models (or LLMs, which you may know as "ChatGPT" or "Gemini"). I've been avoiding GenAI for... Continue Reading →

The Ship of Theseus and the Uncanny Valley

Bit by bit it was replaced, slowly but surely. What was black was now red; what was left was now right. (And what was right had now left.) It reminded me of the Ship of Theseus: were we still us, with all that had been changed? Unnoticed at first, something felt... off. But finger on... Continue Reading →

Tired from Running

So I'm back from a run and I'm feeling tired. I asked myself: how long more? I asked myself: what's the end goal? I asked myself: and then what? No answers. But a thought: man this is just like life, we just keep going and going, waiting for... Then another thought: I swear I wrote... Continue Reading →