I once wrote about one of the dangers of machine learning algorithms (i.e. the thing that powers the rules behind many real-world decisions): the closed feedback loop.

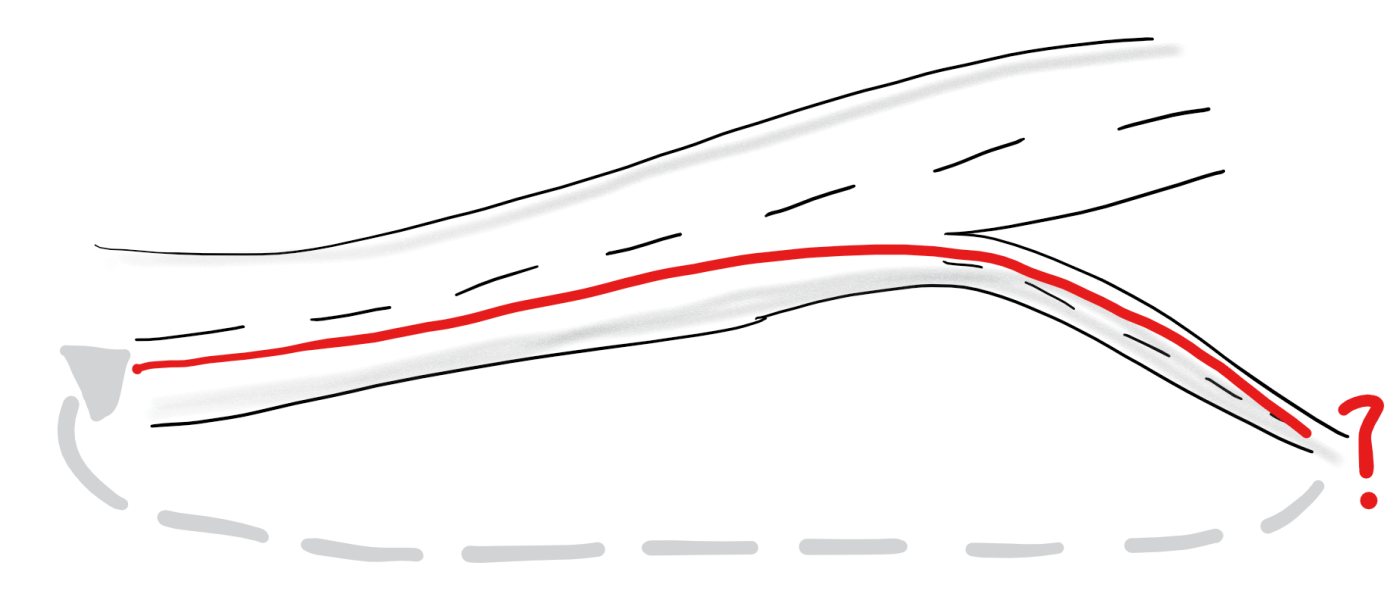

An algorithm that falls into one of these closed feedback loops starts to lose its ability to learn from more data, since the future that it “predicts” is based on an outcome of the past, which it deeply influences. In other words, it becomes self-fulfilling. If the only people whom I talk to are people like myself because I think good outcomes come only from talking to people like myself, I’m never going to learn that talking to people unlike myself may also bring good outcomes.

One possible way out? Random mutation, which is a key part of what we know works in the natural world: evolution.

– Emphasis mine, read full post on Nature.com here: Mutations are the Raw Materials of Evolution

Mutations are essential to evolution. Every genetic feature in every organism was, initially, the result of a mutation. The new genetic variant (allele) spreads via reproduction, and differential reproduction is a defining aspect of evolution. It is easy to understand how a mutation that allows an organism to feed, grow or reproduce more effectively could cause the mutant allele to become more abundant over time. Soon the population may be quite ecologically and/or physiologically different from the original population that lacked the adaptation.

So just how does one apply random mutation to algorithms? I came across an article via Slashdot today that seems to suggest a possible (and quite clever) solution to the problem: introducing uncertainty into the algorithms. Where previously it would have been a very straightforward “if A (input) then B (output)” scenario, we now have an “if A then most likely B, but maybe C or D”.
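To make the "most likely B, but maybe C or D" idea concrete, here is a minimal Python sketch. The outcome names and the 80/10/10 weights are my own assumptions for illustration; the article does not specify any numbers.

```python
import random
from collections import Counter

OUTCOMES = ("B", "C", "D")
WEIGHTS = (0.8, 0.1, 0.1)  # assumed odds, purely illustrative


def deterministic_rule(a):
    """The old way: input A always produces output B."""
    return "B"


def uncertain_rule(a, rng):
    """The new way: input A usually produces B, but sometimes C or D."""
    return rng.choices(OUTCOMES, weights=WEIGHTS, k=1)[0]


rng = random.Random(0)  # fixed seed so the run is repeatable
counts = Counter(uncertain_rule("A", rng) for _ in range(1000))
print(counts)
```

Most of the 1,000 decisions still come out as B, but a minority land on C or D, which is where the new information comes from.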

This seems to be aligned with how nature and evolution work (i.e. through random mutations). Having recently read Ray Dalio’s Principles, I am reminded very much of principle 1.4, and in particular 1.4 (b):

1.4 Look to nature to learn how reality works.

b. To be “good,” something must operate consistently with the laws of reality and contribute to the evolution of the whole

– Recommended: see all the Principles in summary

How might this work in the real world?

Imagine a scenario where somebody writes an algorithm for credit worthiness for bank loans. When the algorithm’s built, some of the attributes that the algorithm thinks are important indicators of credit worthiness may include things like age, income, bank deposit amount, gender, and party affiliation (in Singapore we might have something like the ruling vs. opposition party).

Without uncertainty built in, what would happen is that people of a certain characteristic would tend to always have an easier time obtaining home loans. So let’s say older, high income earners with large bank deposits who are male and prefer the ruling political party are considered more credit-worthy.

Because this group is getting most (or all) of the loans, we will get more and more data on it, and the algorithm will become more accurate at predicting within this group (i.e. the older, higher income earners etc.).

Younger, lower income candidates who have smaller bank deposits, and are female and prefer the opposition party (i.e. the opposites of all the traits the algorithm thinks make a person credit-worthy) would never get loans. And without them getting loans, we would never have more data on them, and would never be able to know if their credit worthiness was as poor as originally thought.
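The closed loop can be sketched as a toy simulation in Python. Everything here is hypothetical: the two group labels, the 0.7 approval threshold, the starting beliefs, and the assumption that both groups actually repay 90% of the time.

```python
import random


def run_closed_loop(n_applicants=1000, seed=0):
    rng = random.Random(seed)
    # Assumed ground truth: both groups are equally reliable.
    true_rate = {"favoured": 0.9, "other": 0.9}
    # The lender's starting belief, as (repaid, loans) pseudo-counts.
    prior = {"favoured": (9, 10), "other": (5, 10)}
    repaid = {g: 0 for g in prior}
    loans = {g: 0 for g in prior}
    threshold = 0.7  # approve only if estimated repayment rate is this high

    def estimate(group):
        s, n = prior[group]
        return (s + repaid[group]) / (n + loans[group])

    for _ in range(n_applicants):
        group = rng.choice(["favoured", "other"])
        if estimate(group) >= threshold:
            # We only observe an outcome when a loan is actually made.
            loans[group] += 1
            if rng.random() < true_rate[group]:
                repaid[group] += 1
    return {g: estimate(g) for g in prior}, loans


estimates, loans = run_closed_loop()
print(estimates, loans)
```

The "other" group starts below the threshold, so it never receives a loan, so its estimate never moves from 0.5, even though in this made-up world it is just as reliable as the favoured group.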

What is more, as times change and circumstances evolve, many of these rules become outdated and simply wrong. What may have started out as decent profiling would soon grow stale and strongly biased, hurting both loan candidates and the bank.

What the introduction of uncertainty would do is let the algorithm, every once in a while, “go rogue” and take a chance. For example, for every 10th candidate the algorithm might take a chance on a profile that’s completely random.

If that random profile happens to be a young person with low income, but who eventually turns out to be credit-worthy, the algorithm now knows that the credit-worthiness of the young and low income may be better than originally thought, and the probabilities could be adjusted based on these facts.

What this may also do is to increase the number of younger, lower income earners who eventually make it past the algorithms and into the hands of real people, giving the algorithms even more information to refine their probabilities.
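Here is a rough Python sketch of the "every 10th candidate" idea, under assumptions of my own: two hypothetical groups that both repay 90% of the time, a 0.7 approval threshold, and a lender that starts out believing the "other" group repays only half the time. Every 10th applicant is approved regardless of the estimate, and each observed outcome updates the estimate for that applicant's group.

```python
import random


def run_with_exploration(n_applicants=1000, explore_every=10, seed=0):
    rng = random.Random(seed)
    # Assumed ground truth: both groups are equally reliable.
    true_rate = {"favoured": 0.9, "other": 0.9}
    # The lender's starting belief, as (repaid, loans) pseudo-counts.
    prior = {"favoured": (9, 10), "other": (5, 10)}
    repaid = {g: 0 for g in prior}
    loans = {g: 0 for g in prior}
    threshold = 0.7

    def estimate(group):
        s, n = prior[group]
        return (s + repaid[group]) / (n + loans[group])

    for i in range(1, n_applicants + 1):
        group = rng.choice(["favoured", "other"])
        take_a_chance = (i % explore_every == 0)  # every 10th applicant
        if take_a_chance or estimate(group) >= threshold:
            loans[group] += 1
            if rng.random() < true_rate[group]:
                repaid[group] += 1
    return {g: estimate(g) for g in prior}, loans


estimates, loans = run_with_exploration()
print(estimates, loans)
```

With the occasional random approval, the "other" group finally produces repayment data, its estimate climbs away from the pessimistic starting point, and once it crosses the threshold its members start getting approved on merit rather than by chance.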

Seems to me to be a pretty important step forward for algorithm design and implementation, and making them, funnily enough, more human.
